Picture typing a few words and watching them turn into a polished video: no cameras, no actors, just AI at work.

That’s the promise of OpenAI’s Sora, a text-to-video model that can produce lifelike, cinematic scenes from a short prompt.

Whether you’re a content creator, marketer, or simply an AI enthusiast, Sora is poised to redefine the way visual content is generated.

In this post, we’ll delve into what Sora is, how it functions, and how you can leverage it to bring your concepts to life.

What is Sora?

OpenAI’s Sora is an advanced AI video generation model that converts text, images, and videos into fresh, dynamic video content.

Designed to democratize video creation, Sora empowers users to craft high-quality videos without the need for traditional filming equipment or extensive editing skills.

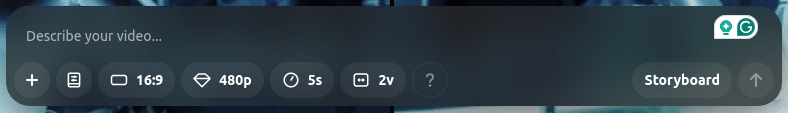

Sora is available through ChatGPT subscription plans. At the time of writing, ChatGPT Plus includes up to 50 priority videos per month at up to 720p resolution and 5-second durations, while ChatGPT Pro includes up to 500 priority videos per month at up to 1080p resolution and 20-second durations, along with added perks.

Features of Sora:

1. Text-to-Video Generation

Sora can transform written descriptions into immersive video content. Users can bring their creative vision to life using a prompt.

Example:

A user inputs the prompt: “A stylish woman strolls along a Tokyo street lined with warm glowing neon lights.”

Sora interprets this description and generates a video showcasing the scene with intricate details, capturing the city ambiance and neon lights.

2. Image-to-Video Conversion

In addition to text prompts, Sora enables users to upload images, which it then animates into captivating video sequences.

Example: Given a still image of a serene beach at sunset, Sora can create a short video where gentle waves lap the shore, seagulls fly across the sky, and the sun gradually sets below the horizon.

3. Video Remixing and Blending

Sora allows users to enhance and modify existing videos by blending them with new elements or styles, fostering creative experimentation.

Example: A user uploads a cityscape video and selects a “cyberpunk” style preset. Sora remixes the original footage, adding a futuristic neon color scheme, holographic billboards, and a dark ambiance inspired by classic cyberpunk imagery.

4. Aspect Ratios and Resolutions

To cater to various platforms and purposes, Sora supports multiple aspect ratios and resolutions.

Example: A content creator needs a vertical video for a social media story. With Sora, they can generate a video in a 9:16 aspect ratio at up to 1080p resolution (the Pro-tier limit noted earlier), which matches the vertical format these platforms expect.
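To make the aspect-ratio arithmetic concrete, here is a minimal Python sketch. It assumes that a label such as “1080p” refers to the shorter side of the frame, which is the common convention. The function name `frame_size` is made up for this illustration and is not part of any Sora API.

```python
def frame_size(aspect_w: int, aspect_h: int, short_side: int) -> tuple[int, int]:
    """Return (width, height) in pixels for an aspect ratio and a short-side length.

    Assumption: a label like "1080p" means the shorter side is 1080 pixels.
    """
    if aspect_w >= aspect_h:
        # Landscape or square: the height is the short side.
        height = short_side
        width = round(short_side * aspect_w / aspect_h)
    else:
        # Portrait: the width is the short side.
        width = short_side
        height = round(short_side * aspect_h / aspect_w)
    return width, height


print(frame_size(9, 16, 1080))  # vertical story  -> (1080, 1920)
print(frame_size(16, 9, 1080))  # widescreen      -> (1920, 1080)
print(frame_size(1, 1, 720))    # square          -> (720, 720)
```

So a 9:16 video at 1080p works out to 1080 × 1920 pixels, the usual size for vertical stories.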

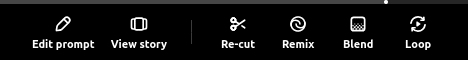

5. Creative Tools

- Remix: Modify existing videos by changing elements like color schemes, backgrounds, or visual effects.

Example: Transform a daytime landscape video into a nighttime scene with a starry sky and ambient moonlight.

- Storyboard: Visualize and plan video sequences by arranging scenes or keyframes.

Example: A filmmaker outlines a short story by creating a sequence of scenes, each representing a different part of the narrative, to preview the flow before the final generation.

- Re-cut: Trim or extend segments within a video to focus on specific moments or adjust pacing.

Example: Shorten a lengthy introduction or highlight a particular action sequence by trimming surrounding content.

- Blend: Seamlessly merge two videos to create a cohesive transition or combined scene.

Example: Blend a clip of a person walking into a forest with another of a mystical creature appearing, creating a smooth transition between the two scenes.

- Loop: Create seamless, repeating video loops ideal for backgrounds or continuous displays.

Example: Generate a looping animation of a rotating planet, perfect for use as a dynamic background in presentations.
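Sora’s internal implementation of these tools is not public, but the ideas behind Blend and Loop can be illustrated with classic video-editing techniques. The sketch below is an assumption-laden toy: each frame is a flat list of brightness values, `crossfade` is a plain linear cross-fade, and `ping_pong_loop` plays a clip forward and then backward so the end meets the start. Both function names are hypothetical.

```python
Frame = list[float]  # one frame as a flat list of pixel brightness values


def crossfade(clip_a: list[Frame], clip_b: list[Frame], overlap: int) -> list[Frame]:
    """Join two clips, linearly mixing the last `overlap` frames of A
    with the first `overlap` frames of B."""
    if overlap < 1 or overlap > min(len(clip_a), len(clip_b)):
        raise ValueError("overlap must be between 1 and the shorter clip length")
    mixed = []
    for i in range(overlap):
        t = (i + 1) / (overlap + 1)  # blend weight moves from A toward B
        frame_a = clip_a[len(clip_a) - overlap + i]
        frame_b = clip_b[i]
        mixed.append([(1 - t) * a + t * b for a, b in zip(frame_a, frame_b)])
    return clip_a[:-overlap] + mixed + clip_b[overlap:]


def ping_pong_loop(clip: list) -> list:
    """Make a seamless loop by playing the clip forward, then backward,
    without repeating the first and last frames."""
    return clip + clip[-2:0:-1]


dark = [[0.0], [0.0], [0.0]]
bright = [[1.0], [1.0], [1.0]]
print(crossfade(dark, bright, overlap=1))  # [[0.0], [0.0], [0.5], [1.0], [1.0]]
print(ping_pong_loop([0, 1, 2, 3]))        # [0, 1, 2, 3, 2, 1]
```

A generative model can do far more than a cross-fade (for example, inventing in-between content), so treat this only as a mental model of what “blending” and “looping” mean at the frame level.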

6. User-Friendly Interface

Sora’s interface is designed to be intuitive, so users of any technical background can find and use its features.

7. Content Moderation and Safety

To promote responsible use, Sora incorporates robust content moderation features:

- Watermarks and Metadata: AI-generated videos carry a visible watermark by default, plus embedded metadata that indicates their origin, for transparency.

Example: A generated video displays a subtle watermark in the corner, denoting it as AI-created content, helping viewers distinguish it from real footage.

- Depiction Restrictions: Sora limits the generation of realistic human appearances to prevent potential misuse, such as deepfakes.

Example: Attempts to create videos depicting specific individuals are blocked, safeguarding against unauthorized likeness replication.

By incorporating these features, Sora empowers users to efficiently produce high-quality, creative video content while upholding ethical standards and user safety.

Step-by-Step: How Does OpenAI’s Sora Model Work?

1. Input Processing

Prior to generating a video, Sora processes the input provided by the user. This input can be text, images, or existing videos.

A) Text-to-Video Input

- The user provides a detailed text prompt describing the desired video scene.

- Sora’s natural language processing (NLP) module interprets the text, breaking it down into key elements such as:

  - Objects (e.g., “a cat,” “a red car”)

  - Actions (e.g., “running,” “jumping,” “swimming”)

  - Environment (e.g., “a rainy street in Tokyo,” “a futuristic city”)

  - Artistic Style & Mood (e.g., “cinematic,” “neon-lit,” “realistic”)

Example:

A user inputs: “A golden retriever runs through a field of wildflowers with the sun setting in the background.”

Sora identifies the dog, the field, the motion of running, and the lighting conditions of a sunset to generate a relevant scene.
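One practical way to use this breakdown is to write prompts that cover each category explicitly. The sketch below is a hypothetical helper for composing such a prompt. The `ScenePrompt` class and its field names are assumptions made for illustration and are not part of any OpenAI tool.

```python
from dataclasses import dataclass


@dataclass
class ScenePrompt:
    """A prompt split into the categories described above (illustrative only)."""
    subject: str       # the main object, e.g. "A golden retriever"
    action: str        # what it does, e.g. "runs through"
    environment: str   # where it happens
    style: str = ""    # optional artistic style or mood

    def to_text(self) -> str:
        """Assemble the parts into a single sentence for the prompt box."""
        sentence = f"{self.subject} {self.action} {self.environment}"
        if self.style:
            sentence += f", {self.style}"
        return sentence + "."


prompt = ScenePrompt(
    subject="A golden retriever",
    action="runs through",
    environment="a field of wildflowers with the sun setting in the background",
    style="cinematic",
)
print(prompt.to_text())
```

Filling in every field is a quick check that a prompt says what is in the scene, what happens, where, and in what style.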

B) Image-to-Video Input

- Users can upload an image as a starting point.

- Sora analyzes the image to extract:

  - Color palettes (e.g., warm tones of a sunset, vibrant city lights)

  - Textures & Materials (e.g., grass, water, fabric)

  - Perspective & Depth Information

Example:

A still image of a beach at sunset can be turned into a video with waves crashing, birds flying, and the sun slowly setting.
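To give a feel for what “extracting a color palette” means, here is a toy Python sketch. Sora’s image analysis is learned by a neural network rather than hand-coded, so this is only an analogy: it assumes an image is a list of RGB tuples and finds the most common colors after coarse rounding. The function name `dominant_colors` and the sample data are invented for the example.

```python
from collections import Counter


def dominant_colors(pixels, k=3, step=64):
    """Return the k most common colors after rounding each RGB channel
    down to a multiple of `step` (a crude form of color quantization)."""
    buckets = Counter(
        tuple((channel // step) * step for channel in pixel) for pixel in pixels
    )
    return [color for color, _count in buckets.most_common(k)]


# A tiny hand-made "sunset": mostly orange, some deep blue, one white pixel.
sunset = [(250, 140, 30)] * 6 + [(20, 30, 120)] * 3 + [(255, 255, 255)]
print(dominant_colors(sunset, k=2))  # [(192, 128, 0), (0, 0, 64)]
```

The warm orange bucket dominates, which is the kind of cue a model could carry forward so that generated frames keep the sunset’s overall look.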

C) Video-to-Video Input (Remixing & Enhancement)

- Users can upload a video that Sora will enhance, extend, or modify.

- The model analyzes movement, frame consistency, and transitions to maintain coherence.

- Users can request style changes, add objects, or modify backgrounds.

Example:

A daytime cityscape video can be transformed into a cyberpunk night scene with neon signs and rain reflections.

2. Latent Space Representation

Once the input is processed, Sora encodes it into a latent space. This step translates the input into a compact numerical representation (a set of vectors) that captures key details like:

- Object relationships

- Motion patterns

- Color schemes and textures

- Perspective and depth

This process compresses information while preserving the structure needed for video generation.

Example:

The phrase “a futuristic car speeding through a neon-lit highway” is transformed into a numerical format that helps the AI generate consistent video frames.
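The idea of compressing information while preserving structure can be shown with a deliberately simple stand-in. A real encoder is a learned neural network. The sketch below instead uses fixed average pooling over square patches, which is an assumption made purely for illustration, and the function name `average_pool` is hypothetical.

```python
def average_pool(frame, patch):
    """Compress a 2D grid of brightness values by averaging each
    patch x patch block into a single number."""
    height, width = len(frame), len(frame[0])
    if height % patch or width % patch:
        raise ValueError("frame dimensions must be divisible by the patch size")
    latent = []
    for top in range(0, height, patch):
        row = []
        for left in range(0, width, patch):
            block = [
                frame[y][x]
                for y in range(top, top + patch)
                for x in range(left, left + patch)
            ]
            row.append(sum(block) / len(block))
        latent.append(row)
    return latent


frame = [
    [0, 0, 8, 8],
    [0, 0, 8, 8],
    [4, 4, 2, 2],
    [4, 4, 2, 2],
]
print(average_pool(frame, 2))  # [[0.0, 8.0], [4.0, 2.0]]
```

Sixteen values shrink to four, yet the layout (dark top-left, bright top-right) survives. A learned latent space aims for the same trade-off with far richer features.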

3. Diffusion Model Processing

Sora utilizes diffusion models to generate video frames from scratch. This involves:

A) Starting from Noise (Reverse Diffusion)

- The model starts with random noise (similar to static on a TV screen).

- It gradually removes the noise while shaping the pixels to match the prompt.

B) Iterative Refinement

- Through multiple steps, the AI adds details, enhances textures, and improves clarity.

- The process ensures temporal consistency, meaning objects and actions remain smooth across frames.

Example:

For the golden retriever running in a field, Sora ensures:

- The dog’s fur flows naturally with the wind.

- The shadows move consistently as the sun sets.

- The background remains steady, avoiding glitches.
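The loop of starting from noise and refining step by step can be sketched in a few lines of Python. This is a toy under a big assumption: a real diffusion model uses a trained network to predict what to remove at each step, guided by the prompt, whereas the stand-in below simply “knows” the target values. The names `toy_denoise` and `max_error` are invented for the example.

```python
import random


def toy_denoise(target, steps=20, seed=0):
    """Start from random noise and move toward `target` in equal steps.

    Assumption: the update rule cheats by using the target directly;
    a real model would predict the clean signal from the noisy one.
    """
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # pure noise, like TV static
    history = [x]
    for step in range(steps):
        remaining = steps - step
        # Remove an equal share of the remaining error at every step.
        x = [xi + (ti - xi) / remaining for xi, ti in zip(x, target)]
        history.append(x)
    return history


def max_error(values, target):
    """Largest absolute difference between the current values and the target."""
    return max(abs(v - t) for v, t in zip(values, target))


target = [0.2, 0.8, 0.5]
history = toy_denoise(target)
for step in (0, 5, 10, 15, 20):
    print(step, round(max_error(history[step], target), 4))
```

Running it prints an error that shrinks steadily toward zero, mirroring how each refinement pass brings the frames closer to what the prompt describes.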

4. Transformer Model for Temporal Consistency

Unlike static image generators, video AI must handle motion. Sora integrates transformer-based architectures to ensure:

- Consistent object placement (so the same cat doesn’t change shape in different frames).

- Realistic motion physics (like the way hair moves in the wind).

- Frame coherence (so there’s no flickering or weird jumps).

Sora achieves this by:

- Analyzing sequences of frames to understand movement.

- Using attention mechanisms to focus on important elements like a person’s face, a moving car, or flowing water.

Example:

For a video of a dancer performing on stage, Sora ensures:

- The outfit moves naturally with the dance.

- The stage lighting changes smoothly.

- The dancer’s movements don’t glitch between frames.
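For readers curious about what an attention mechanism computes, here is a minimal pure-Python sketch of scaled dot-product attention, the standard building block of transformers. How Sora wires attention into its model is not fully public, so the tiny two-number “frame embeddings” below are made-up stand-ins rather than anything Sora actually produces.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return output, weights


# Hypothetical embeddings: frames 0 and 1 look alike, frame 2 is different.
frames = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
output, weights = attend(frames[0], frames, frames)
print([round(w, 3) for w in weights])
```

Frame 0 puts the most weight on itself and on the similar frame 1, and the least on frame 2. That weighting is what lets a model keep related content consistent from one frame to the next.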

5. Video Synthesis and Output Generation

Once Sora refines the video, it assembles and enhances the final output.

A) Frame Assembly

- The AI combines multiple video frames into a smooth sequence.

- It adjusts frame rates (e.g., 30 FPS, 60 FPS) for high-quality motion.

B) Post-Processing

- Color correction and lighting adjustments for realism.

- Stabilization and sharpness enhancement for crisp details.

- Final resolution selection (e.g., 720p or 1080p, depending on the plan).

Example:

A forest scene at dawn might undergo:

- Brightness and contrast adjustments to match the early morning light.

- Smoother tree movements in the wind.

- Higher-resolution textures for added realism.
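Two of these ideas reduce to simple arithmetic. The sketch below shows how many frames a clip needs at a given frame rate and what a basic contrast adjustment does to brightness values. The 30 FPS figure and both function names are assumptions for illustration, and Sora’s actual post-processing is not documented at this level.

```python
def frame_count(duration_s: float, fps: int) -> int:
    """Number of frames needed for a clip of the given length."""
    return round(duration_s * fps)


def adjust_contrast(frame, factor, pivot=0.5):
    """Push brightness values away from the pivot (factor > 1) or toward it
    (factor < 1), clamping the result to the range [0, 1]."""
    return [min(1.0, max(0.0, pivot + (p - pivot) * factor)) for p in frame]


print(frame_count(5, 30))    # 5-second clip at 30 FPS  -> 150 frames
print(frame_count(20, 30))   # 20-second clip at 30 FPS -> 600 frames
print(adjust_contrast([0.25, 0.5, 0.75], factor=2.0))  # [0.0, 0.5, 1.0]
```

Even a 20-second clip means hundreds of frames that all have to agree with each other, which is why consistency across frames matters so much.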

6. Content Moderation & Safety Features

Sora is designed with ethical considerations to prevent misuse. The model:

- Adds watermarks and metadata to indicate AI-generated content.

- Restricts highly realistic human deepfakes to prevent fraud.

- Monitors input prompts to block inappropriate content.

Example:

If someone attempts to generate a fake video of a celebrity, Sora will block or alter the request to prevent misuse.
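Prompt monitoring in production systems typically relies on trained classifiers and human review, and OpenAI has not published Sora’s exact pipeline. Purely as a mental model, the sketch below shows the simplest possible version: a keyword check that runs before any generation starts. The blocked terms and the function name `check_prompt` are hypothetical.

```python
# Hypothetical blocklist, chosen only for this illustration.
BLOCKED_TERMS = {"celebrity", "deepfake"}


def check_prompt(prompt: str):
    """Return (allowed, matched_terms) for a prompt.

    Assumption: a plain keyword match stands in for a real moderation model.
    """
    words = {word.strip(".,!?\"'").lower() for word in prompt.split()}
    matches = sorted(words & BLOCKED_TERMS)
    return (not matches, matches)


print(check_prompt("A golden retriever runs through a field of wildflowers."))
# (True, [])
print(check_prompt("A fake video of a celebrity giving a speech."))
# (False, ['celebrity'])
```

A keyword list like this is easy to evade and prone to false positives, which is one reason real moderation layers several signals instead of relying on one filter.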

By following these steps, Sora creates high-quality, dynamic videos that push the boundaries of AI-powered video generation.