Making sound decisions is a core responsibility for anyone accountable for outcomes, and part of that responsibility is knowing when to rely on artificial intelligence (AI) and when to trust human intuition. AI systems are becoming more integrated into many aspects of our lives, including decision-making processes at both small and large companies. While AI excels at processing data and identifying patterns that may elude human perception, it is not flawless.

Therefore, understanding when to trust AI versus human judgment is vital for making optimal decisions. This article examines the decision-making processes of humans and AI, particularly in the realm of computer vision. It also compares AI and human judgment across several criteria.

Understanding Decision-Making Processes

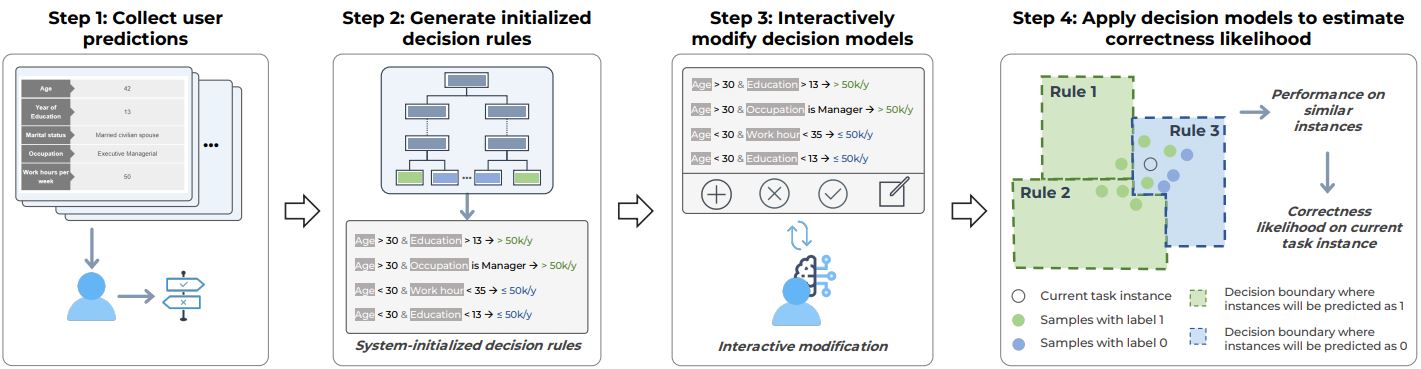

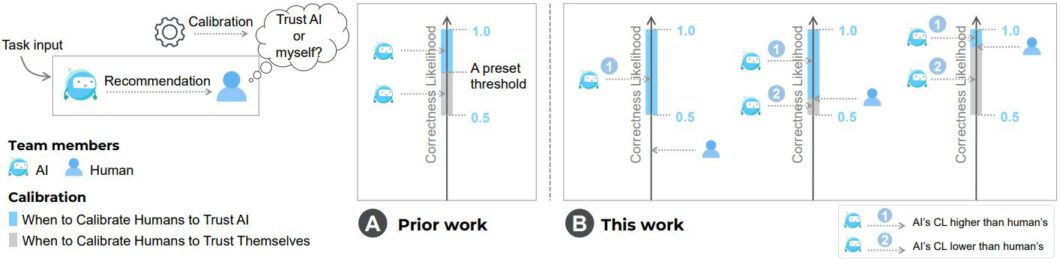

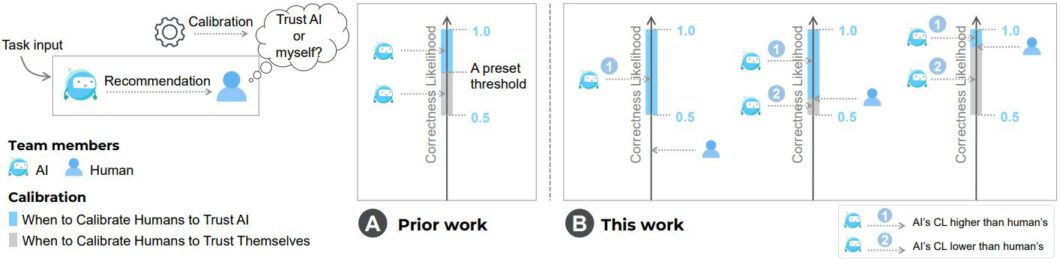

In critical decision-making scenarios, humans and AI systems follow distinct processes to arrive at decisions. The correctness likelihood (CL) metric enables researchers to evaluate the accuracy of decision-making processes. CL measures the probability of making the right decision in a given situation. AI relies on algorithms and learning patterns from training data to draw conclusions, while human decision-making involves intuition, past experiences, and contextual understanding, which are challenging to quantify.

The CL metric is a more natural fit for AI systems, which can report a confidence score for each decision based on statistical analysis. Measuring CL for humans is harder and requires a deeper understanding of how people make critical decisions.

How AI Makes Decisions

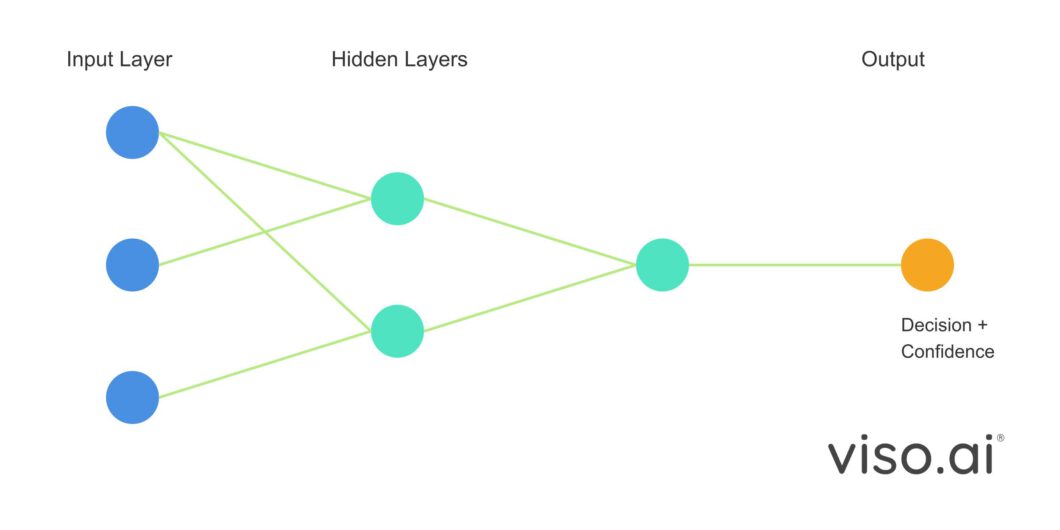

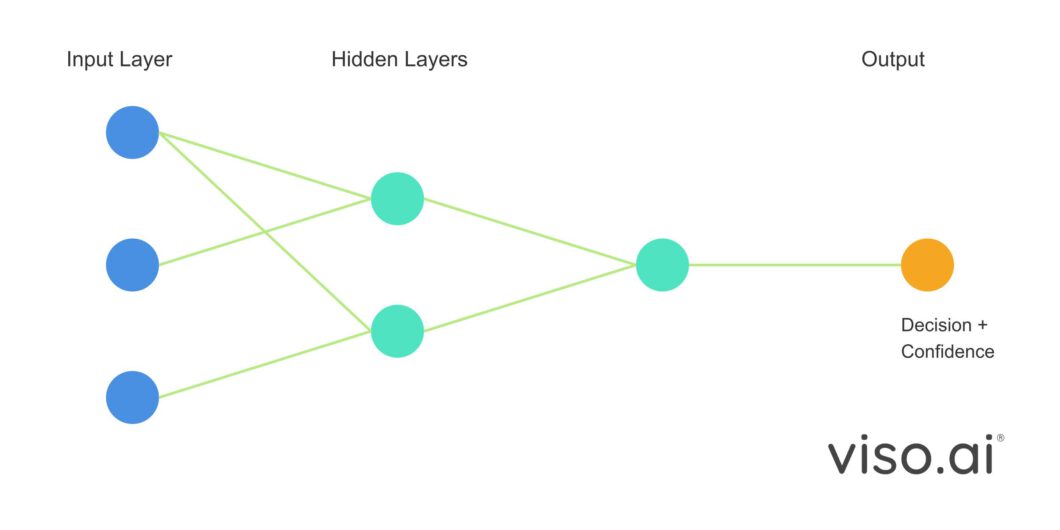

Many modern AI models are built from artificial neural networks (ANNs) and make decisions through pattern recognition and statistical analysis. These models are trained on extensive datasets to learn patterns and make predictions. AI approaches decision-making differently from human reasoning, relying on mathematical algorithms and learned data representations.

Modern AI systems, particularly computer vision and large language models, utilize various forms of artificial neural networks to process information. Here is the decision-making process for AI systems:

- Input Processing: The model receives and converts data (e.g., images or text) into a processable format

- Feature Extraction: Neural networks identify relevant patterns and features in the input

- Pattern Matching: These features are compared against the patterns the model learned during training

- Output Generation: The model produces a decision along with a confidence score
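The final step above, turning raw model outputs into a decision plus a confidence score, can be sketched as follows. This is a minimal illustration that assumes the model ends in a softmax layer, which is common for classifiers but not stated in this article. The label names and raw scores are hypothetical.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def decide(logits, labels):
    """Return the most likely label and its probability as a confidence score."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


# Hypothetical raw scores for a single image (not taken from a real model)
labels = ["defect", "no_defect", "unclear"]
label, confidence = decide([2.0, 0.5, 0.1], labels)
print(label, round(confidence, 3))
```

Note that a softmax probability is only a proxy for correctness: a model can report a high score and still be wrong, which is why the later sections pair confidence with human oversight.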

Explainability is also a crucial consideration, because it matters why an AI system reaches a specific decision. Neural networks are often perceived as black boxes, which makes their decisions difficult to explain. Researchers are incorporating explainability techniques into modern AI systems to make the decision-making process more transparent.

Human Decision-Making Process

Humans make decisions through a complex process involving analytical thinking, intuition, and past experience. This makes human correctness likelihood (CL) harder to measure than AI confidence, especially in computer vision tasks. In a visual task, the human process typically involves:

- Past Experience: Humans rely on previous visual experiences

- Pattern Recognition: Humans search for familiar visual patterns and features based on past experiences

- Analysis: Combining experience with visual input

- Final Decision: Making a prediction based on gathered information

While computer vision models can provide confidence scores for their predictions, human accuracy has to be estimated indirectly. Research shows that humans often struggle to accurately assess their own confidence in visual tasks, so human CL needs a well-defined measurement process, such as:

- Comparing predictions to known ground truth data

- Analyzing performance on similar visual tasks

- Studying decision-making patterns across multiple cases

- Calculating the Correctness Likelihood (CL) based on past performance
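One way to turn the steps above into a number is to estimate CL as the fraction of past decisions that matched ground truth, grouped by task type. The sketch below is an assumption about how this could be done, not the method used in the research this article refers to. The record layout, the task names, and the smoothing prior (which keeps a single past case from producing a CL of exactly 0 or 1) are all hypothetical.

```python
from collections import defaultdict


def estimate_cl(history, prior_correct=1, prior_total=2):
    """Estimate correctness likelihood per task type.

    history: iterable of (task_type, prediction, ground_truth) records.
    The prior acts as one imaginary correct and one imaginary incorrect
    decision, so sparse histories are pulled toward 0.5.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for task_type, prediction, truth in history:
        total[task_type] += 1
        correct[task_type] += int(prediction == truth)
    return {
        task: (correct[task] + prior_correct) / (total[task] + prior_total)
        for task in total
    }


# Hypothetical review history for one human inspector
history = [
    ("crack", "defect", "defect"),
    ("crack", "defect", "ok"),
    ("crack", "ok", "ok"),
    ("stain", "ok", "ok"),
]
cl = estimate_cl(history)
print(cl)
```

Grouping by task type reflects the idea of analyzing performance on similar visual tasks: a person's CL on crack detection says little about their CL on an unrelated task.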

Understanding when to trust human visual analysis versus computer vision models is crucial for critical decision-making. The next section compares AI and humans in visual decision-making.

Trusting AI vs. Humans: Key Criteria

Various factors must be considered when deciding whether to trust AI or human decisions, especially in critical vision tasks. Recent research highlights the imperfections of both. AI systems can make mistakes despite high confidence scores because of factors like bias or overfitting, and humans can err because of overconfidence or bias.

It is essential to assess the strengths of AI and humans in critical decision-making across criteria such as speed, accuracy, adaptability, and accountability for errors. These criteria help determine which tasks are better suited to humans, which are better handled by AI, and where a blend of AI and human intelligence could yield the best results.

Processing Speed and Efficiency

AI systems have a notable advantage in processing speed. Computer vision models can analyze hundreds or thousands of images per second, whereas humans need significantly more time to process visual information carefully. This speed is an advantage, but research indicates that it can also let errors slip through unnoticed.

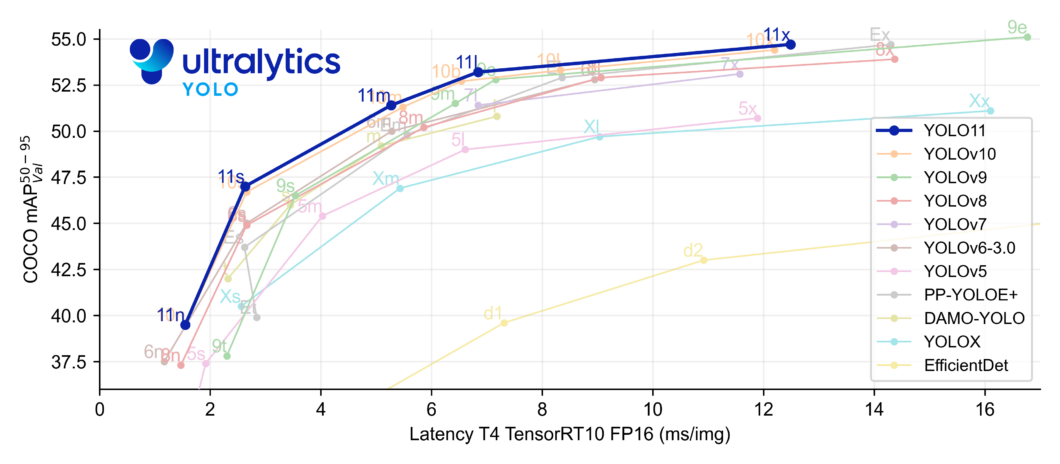

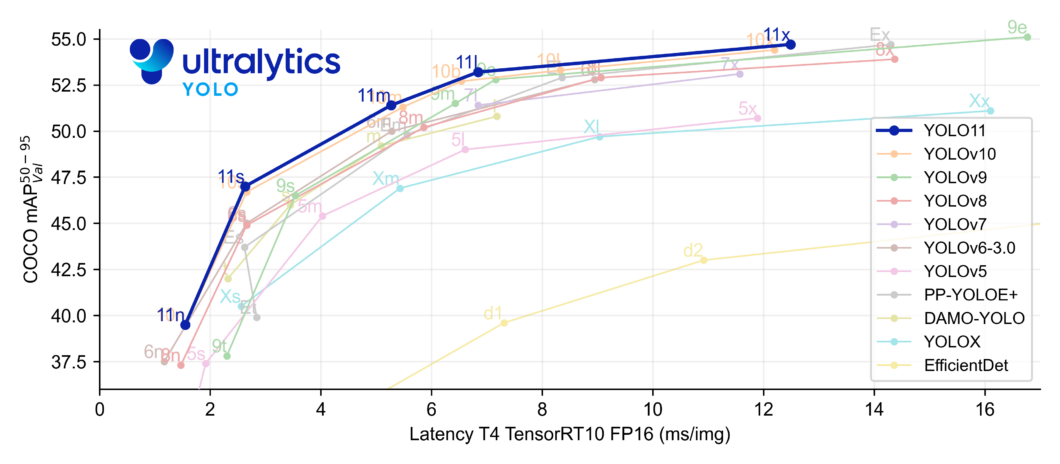

For instance, YOLO models, popular in critical decision-making scenarios, perform differently depending on their size. Larger models offer higher accuracy at the cost of higher latency, while smaller models respond faster but are less accurate. Where AI assists in decision-making, such as in solar panel maintenance, human oversight remains essential. When AI's confidence is low, humans should be given more time for analysis, which promotes deeper analytical thinking. This matters most in high-stakes tasks like medical diagnosis.

Accuracy and Reliability

Neither humans nor AI systems are infallible when it comes to accuracy in visual tasks. While computer vision models can achieve high accuracy on benchmark datasets, their performance may falter in real-world situations due to issues like overfitting. Similarly, humans can excel in familiar visual tasks but are susceptible to fatigue, bias, and inconsistency.

Human accuracy varies with conditions and mental state. Humans often exhibit poorly calibrated self-confidence: they may be confident in incorrect decisions or unsure about correct ones. Maximizing accuracy therefore involves knowing when AI or humans are more likely to be correct. AI tends to excel in repetitive visual tasks, well-defined pattern recognition, and high-volume inspection. Humans, on the other hand, shine in novel problem-solving scenarios, complex contexts, and unexpected variations.
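Calibration can be checked with a simple comparison between stated confidence and actual accuracy. The sketch below computes the gap between the two for a set of decisions; it is a deliberately coarse illustration (binned measures such as expected calibration error are more precise), and the sample records are hypothetical.

```python
def calibration_gap(records):
    """Return mean stated confidence minus observed accuracy.

    records: list of (stated_confidence, was_correct) pairs.
    A positive gap suggests overconfidence, a negative gap underconfidence.
    """
    if not records:
        raise ValueError("at least one record is required")
    mean_confidence = sum(conf for conf, _ in records) / len(records)
    accuracy = sum(1 for _, ok in records if ok) / len(records)
    return mean_confidence - accuracy


# Hypothetical self-reported confidence from a human reviewer
human_records = [(0.9, True), (0.9, False), (0.8, False), (0.6, True)]
gap = calibration_gap(human_records)
print(round(gap, 2))
```

The same function works for AI confidence scores, so both sides can be compared on the same footing before deciding whose judgment to weight more heavily.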

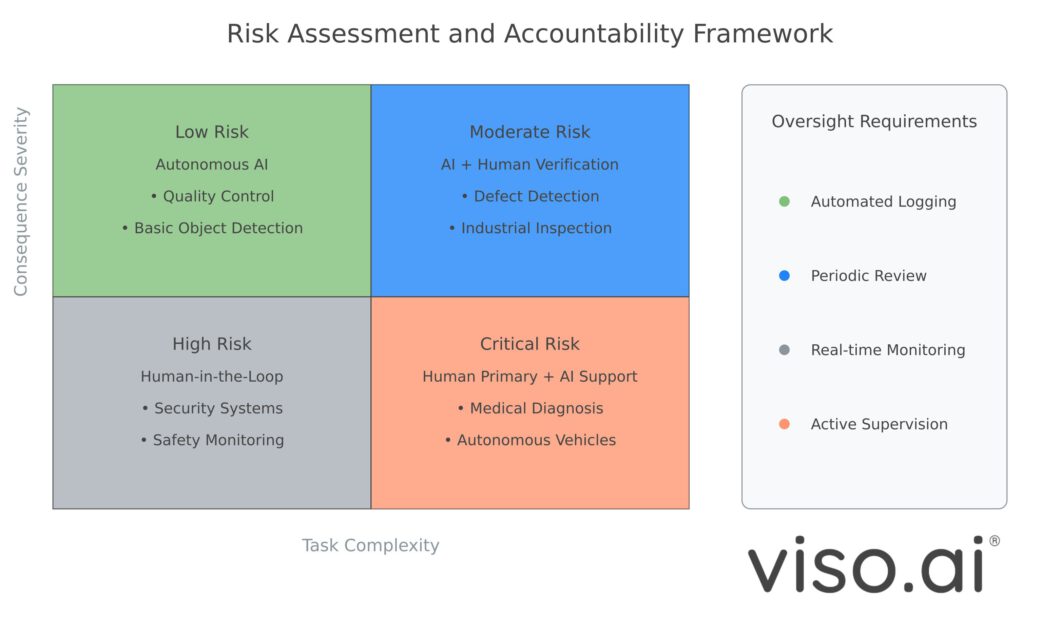

Risk and Accountability

When employing computer vision systems for critical decision-making, assessing risk and accountability is paramount. While AI systems can process images swiftly and accurately, mistakes can have severe repercussions for companies. In scenarios where AI shows high confidence in incorrect predictions, the risk is heightened. AI systems lack the intuitive explanation capabilities of humans, making it challenging to pinpoint the cause of errors.

In high-risk scenarios, it is crucial to define the roles of computer vision models and their decision impacts. Establishing clear rules to prevent critical errors is essential. Some example rules for critical computer vision tasks include:

- Regular validation of AI predictions by human experts

- Established protocols for low AI confidence scenarios

- Defined responsibility chains for decision-making

- Documentation of the decision-making process
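Rules like these can be encoded as an explicit routing policy. The sketch below is one possible arrangement, not a prescribed standard: the threshold value, the audit interval, and the owner names are hypothetical and would need to be set per application.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewPolicy:
    low_confidence: float = 0.7  # hypothetical cutoff for escalation
    audit_every: int = 10        # every Nth prediction is human-validated
    log: list = field(default_factory=list)
    seen: int = 0

    def route(self, item_id, label, confidence):
        """Decide who owns the decision and document it."""
        self.seen += 1
        if confidence < self.low_confidence:
            owner = "human_review"   # protocol for low AI confidence
        elif self.seen % self.audit_every == 0:
            owner = "human_audit"    # regular validation by an expert
        else:
            owner = "ai_auto"
        # Documentation: keep a record of every routing decision
        self.log.append(
            {"item": item_id, "label": label,
             "confidence": confidence, "owner": owner}
        )
        return owner


policy = ReviewPolicy()
print(policy.route("img_001", "defect", 0.95))
print(policy.route("img_002", "ok", 0.40))
```

Keeping the log inside the policy object means the responsibility chain for each decision can be reconstructed later from a single record.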

Striking the right balance between AI capabilities and human oversight is key in high-risk scenarios. The following sections explore hybrid approaches that integrate computer vision and human intelligence in decision-making.

Hybrid Human-AI Approaches in Critical Decision-Making

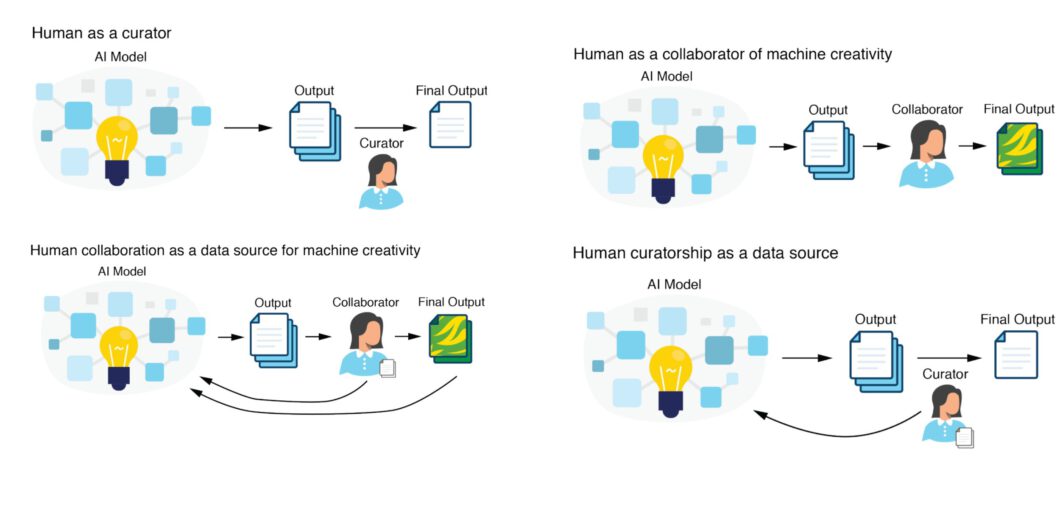

The future of critical decision-making lies in harnessing the strengths of both AI and human intelligence rather than choosing one over the other. By combining computer vision capabilities with human expertise, organizations can build more dependable decision-making systems.

Establishing Effective Human-AI Teams

To create successful hybrid approaches, organizations must design workflows tailored to utilize computer vision and human experts to their fullest potential. Computer vision models can swiftly process vast amounts of data and detect potential issues, while human experts can provide contextual assistance and handle uncertain scenarios where AI falls short. A common strategy is to use AI for initial analysis or screening, with humans acting as supervisors or reviewers. For instance, in manufacturing quality control, computer vision models can monitor production lines and flag potential defects, which human experts can then review for final decisions based on their expertise.

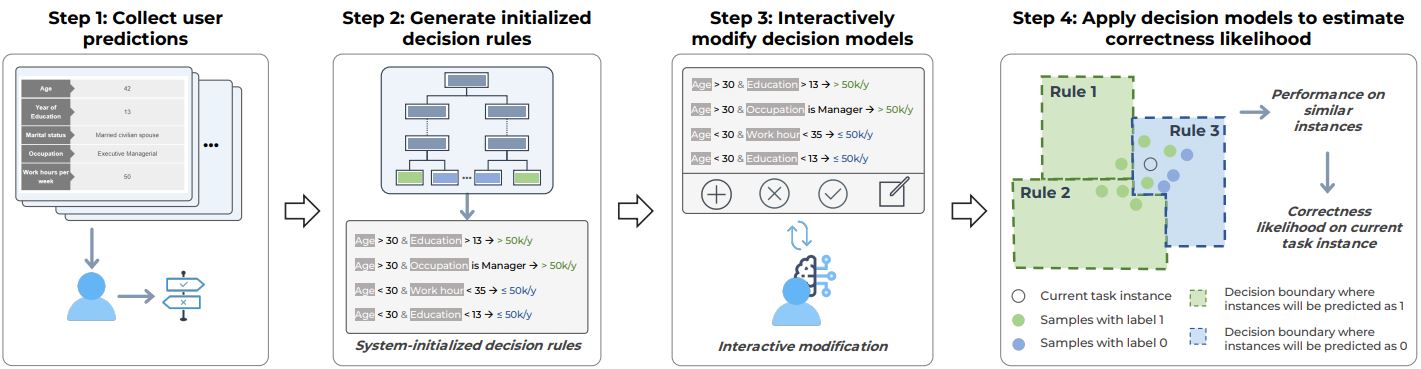

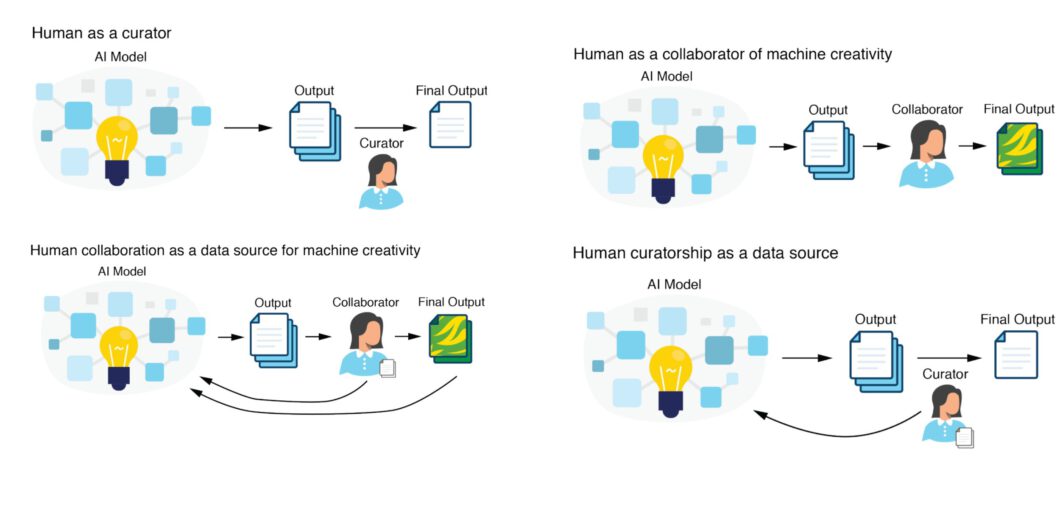

Organizations can implement hybrid decision-making between computer vision models and humans in several ways:

- AI-Assisted Detection: AI detects potential objects or anomalies, validated by humans for final decisions

- Human-in-the-Loop: Humans provide contextual guidance for AI to enhance output and learning

- Expertise Augmentation: AI offers additional analysis to human-made decisions, providing diverse perspectives for each decision

- Collaborative Annotation: Humans label training data to enhance AI’s detection accuracy
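The first method, AI-assisted detection, can be sketched as a two-stage pipeline in which the model screens every item and a human makes the final call on anything it flags. The `ai_model` and `human_review` callables, the threshold, and the sample data below are hypothetical stand-ins, not a real model or review interface.

```python
def screen_then_review(items, ai_model, human_review, flag_threshold=0.5):
    """Run AI screening on all items; send flagged items to a human.

    ai_model(item) -> defect score in [0, 1]
    human_review(item, score) -> final decision for a flagged item
    """
    results = []
    for item in items:
        score = ai_model(item)
        if score >= flag_threshold:
            # Flagged: the human expert makes the final decision
            results.append((item, human_review(item, score), "human"))
        else:
            # Not flagged: the AI screening result stands
            results.append((item, "pass", "ai"))
    return results


# Hypothetical scores and a stand-in for the human expert's judgment
scores = {"panel_1": 0.1, "panel_2": 0.8, "panel_3": 0.6}
confirmed_defects = {"panel_2"}

results = screen_then_review(
    items=list(scores),
    ai_model=scores.get,
    human_review=lambda item, score: "reject" if item in confirmed_defects else "pass",
)
print(results)
```

In this arrangement the threshold controls the trade-off: lowering it sends more items to humans and reduces missed defects, at the cost of more review time.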

Future of AI-Assisted Critical Decision Making

As computer vision technology advances, success will depend on combining human and machine intelligence effectively rather than choosing between them. The most successful approaches will preserve human accountability while leveraging AI's processing power.

We can expect more robust hybrid systems tailored to different scenarios and risk levels. Ethical considerations should remain a top priority when implementing them: as research continues to integrate computer vision into critical decision-making, transparency, fairness, and accountability should guide the design of any hybrid system.